Yapeng Meng is a third-year Ph.D. candidate at the Center of Brain Inspired Computing Research (CBICR), Department of Precision Instrument, Tsinghua University, under the supervision of Prof. Luping Shi and Prof. Rong Zhao.

He is a member of the Tianmouc brain-inspired vision sensor team. His research interests include low-level vision, generative models, and sim-to-real data generation. He aims to leverage a complementary multi-pathway visual paradigm to enable intelligent and robust visual perception for open-world sensing.

Before joining Tsinghua, he received his B.Eng. in School of Astronautics, Beihang University (BUAA), where he conducted research as an undergraduate intern in the laboratories of Prof. Shenwei Shi and Prof. Zhengxia Zou.

Warning

Problem: The current name of your GitHub Pages repository ("Solution: Please consider renaming the repository to "

http://".

However, if the current repository name is intended, you can ignore this message by removing "{% include widgets/debug_repo_name.html %}" in index.html.

Action required

Problem: The current root path of this site is "baseurl ("_config.yml.

Solution: Please set the

baseurl in _config.yml to "Education

-

Tsinghua UniversityCenter of Brain Inspired Computing Research

Tsinghua UniversityCenter of Brain Inspired Computing Research

Department of Precision Instrument

Ph.D. StudentSep. 2023 - present -

Beihang UniversityB.Eng. in School of Astronautics

Beihang UniversityB.Eng. in School of Astronautics

B.Sc. in Mathematics and Applied Mathematics

Minor in Engineering ManagementSep. 2019 - Jul. 2023

Honors & Awards

-

Comprehensive Excellence Scholarship, Tsinghua University2025

-

Outstanding Student Cadre, Tsinghua University2024

-

Scholarship for Future Scholars, Tsinghua University2023

-

Outstanding Graduate of Beijing2023

-

Shen Yuan Medal (Top 10 College Students), Beihang University2022

-

Baosteel Scholarship2022

-

National Scholarship2021

-

National Scholarship2020

Selected Publications (view all )

Spatio-Temporal Difference Guided Motion Deblurring with the Complementary Vision Sensor

Yapeng Meng*, Lin Yang*, Yuguo Chen, Xiangru Chen, Taoyi Wang, Lijian Wang, Zheyu Yang, Yihan Lin#, Rong Zhao# (* equal contribution, # corresponding author)

Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) 2026

Motion blur arises when rapid scene changes occur during the exposure period, collapsing rich intra-exposure motion into a single RGB frame. Without explicit structural or temporal cues, RGB-only deblurring is highly ill-posed and often fails under extreme motion. Inspired by the human visual system, neuromorphic sensors introduce temporally dense information to alleviate this problem; however, event cameras still suffer from event rate saturation under rapid motion, while the event modality entangles edge features and motion cues, which limits their effectiveness. As a recent breakthrough, the complementary vision sensor (CVS) captures synchronized RGB frames together with high-frame-rate, multi-bit spatial difference (SD, encoding structural edges) and temporal difference (TD, encoding motion cues) data within a single RGB exposure, offering a promising solution for RGB deblurring under extreme dynamic scenes. To fully leverage these complementary modalities, we propose Spatio-Temporal Difference Guided Deblur Net (STGDNet), which adopts a recurrent multi-branch architecture that iteratively encodes and fuses SD and TD sequences to restore structure and color details lost in blurry RGB inputs. Our method outperforms current RGB or event-based approaches in both synthetic CVS dataset and real-world evaluations. Moreover, STGDNet exhibits strong generalization capability across over 100 extreme real-world scenarios.

Spatio-Temporal Difference Guided Motion Deblurring with the Complementary Vision Sensor

Yapeng Meng*, Lin Yang*, Yuguo Chen, Xiangru Chen, Taoyi Wang, Lijian Wang, Zheyu Yang, Yihan Lin#, Rong Zhao# (* equal contribution, # corresponding author)

Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) 2026

Motion blur arises when rapid scene changes occur during the exposure period, collapsing rich intra-exposure motion into a single RGB frame. Without explicit structural or temporal cues, RGB-only deblurring is highly ill-posed and often fails under extreme motion. Inspired by the human visual system, neuromorphic sensors introduce temporally dense information to alleviate this problem; however, event cameras still suffer from event rate saturation under rapid motion, while the event modality entangles edge features and motion cues, which limits their effectiveness. As a recent breakthrough, the complementary vision sensor (CVS) captures synchronized RGB frames together with high-frame-rate, multi-bit spatial difference (SD, encoding structural edges) and temporal difference (TD, encoding motion cues) data within a single RGB exposure, offering a promising solution for RGB deblurring under extreme dynamic scenes. To fully leverage these complementary modalities, we propose Spatio-Temporal Difference Guided Deblur Net (STGDNet), which adopts a recurrent multi-branch architecture that iteratively encodes and fuses SD and TD sequences to restore structure and color details lost in blurry RGB inputs. Our method outperforms current RGB or event-based approaches in both synthetic CVS dataset and real-world evaluations. Moreover, STGDNet exhibits strong generalization capability across over 100 extreme real-world scenarios.

Diffusion-Based Extreme High-speed Scenes Reconstruction with the Complementary Vision Sensor

Yapeng Meng*, Yihan Lin*, Taoyi Wang, Yuguo Chen, Lijian Wang, Rong Zhao (* equal contribution)

Proceedings of the IEEE/CVF international conference on computer vision (ICCV) 2025

Recording and reconstructing high-speed scenes poses a significant challenge. While high-speed cameras can capture fine temporal details, their extremely high bandwidth demands make continuous recording unsustainable. Conversely, traditional RGB cameras, typically operating at 30 FPS, rely on frame interpolation to synthesize high-speed motion, often introducing artifacts and motion blur. Human visual system inspired sensors, like event cameras, offer high-speed sparse temporal or spatial variation data, partially alleviating these issues. However, existing methods still suffer from RGB blur, temporal aliasing, and loss of event information. To overcome these challenges, we leverage a novel complementary vision sensor, Tianmouc, which outputs high-speed, multi-bit, sparse spatio-temporal difference information with RGB frames. Building on this unique sensing modality, we introduce a Cascaded Bi-directional Recurrent Diffusion Model (CBRDM) that achieves accurate, sharp, color-rich video frames reconstruction. Our method outperforms state-of-the-art RGB interpolation algorithms in quantitative evaluations and surpasses eventbased methods in real-world comparisons.

Diffusion-Based Extreme High-speed Scenes Reconstruction with the Complementary Vision Sensor

Yapeng Meng*, Yihan Lin*, Taoyi Wang, Yuguo Chen, Lijian Wang, Rong Zhao (* equal contribution)

Proceedings of the IEEE/CVF international conference on computer vision (ICCV) 2025

Recording and reconstructing high-speed scenes poses a significant challenge. While high-speed cameras can capture fine temporal details, their extremely high bandwidth demands make continuous recording unsustainable. Conversely, traditional RGB cameras, typically operating at 30 FPS, rely on frame interpolation to synthesize high-speed motion, often introducing artifacts and motion blur. Human visual system inspired sensors, like event cameras, offer high-speed sparse temporal or spatial variation data, partially alleviating these issues. However, existing methods still suffer from RGB blur, temporal aliasing, and loss of event information. To overcome these challenges, we leverage a novel complementary vision sensor, Tianmouc, which outputs high-speed, multi-bit, sparse spatio-temporal difference information with RGB frames. Building on this unique sensing modality, we introduce a Cascaded Bi-directional Recurrent Diffusion Model (CBRDM) that achieves accurate, sharp, color-rich video frames reconstruction. Our method outperforms state-of-the-art RGB interpolation algorithms in quantitative evaluations and surpasses eventbased methods in real-world comparisons.

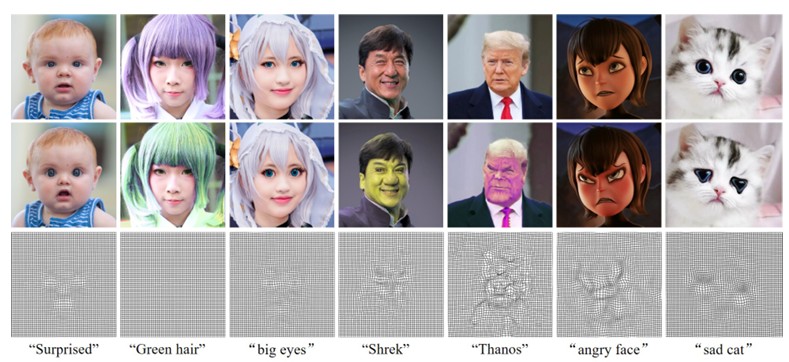

Text-driven Physically Interpretable Face Editing

Songru Yang*, Yapeng Meng*, Zhenwei Shi, Zhengxia Zou (* equal contribution)

IEEE International Conference on Multimedia and Expo Workshops (ICMEW) 2025

This paper proposes a novel and physically interpretable method for face editing with arbitrary text prompts. Different from previous GAN-inversion editing methods that manipulate its latent space or diffusion methods conduct manipulation as a reverse process, we regard the face editing process as imposing vector flow fields on face images, representing the offset of spatial coordinates and color for each pixel. Under this paradigm, we represent the vector flow field in two ways: 1) explicitly represent the flow vectors with rasterized tensors, and 2) implicitly parameterize the flow vectors as continuous, smooth, and resolution-agnostic neural fields. The flow vectors are iteratively optimized under the guidance of the pre-trained CLIP model by maximizing the correlation between the edited image and the text prompt. We also propose a learning-based one-shot face editing framework, which is fast and adaptable to any text prompt input. Compared with SOTA text-driven face editing methods, our method can generate physically interpretable face editing results with high identity consistency and image quality.

Text-driven Physically Interpretable Face Editing

Songru Yang*, Yapeng Meng*, Zhenwei Shi, Zhengxia Zou (* equal contribution)

IEEE International Conference on Multimedia and Expo Workshops (ICMEW) 2025

This paper proposes a novel and physically interpretable method for face editing with arbitrary text prompts. Different from previous GAN-inversion editing methods that manipulate its latent space or diffusion methods conduct manipulation as a reverse process, we regard the face editing process as imposing vector flow fields on face images, representing the offset of spatial coordinates and color for each pixel. Under this paradigm, we represent the vector flow field in two ways: 1) explicitly represent the flow vectors with rasterized tensors, and 2) implicitly parameterize the flow vectors as continuous, smooth, and resolution-agnostic neural fields. The flow vectors are iteratively optimized under the guidance of the pre-trained CLIP model by maximizing the correlation between the edited image and the text prompt. We also propose a learning-based one-shot face editing framework, which is fast and adaptable to any text prompt input. Compared with SOTA text-driven face editing methods, our method can generate physically interpretable face editing results with high identity consistency and image quality.

Text-guided 3D face synthesis-from generation to editing

Yunjie Wu*, Yapeng Meng*, Zhipeng Hu*, Lincheng Li, Haoqian Wu, Kun Zhou, Weiwei Xu, Xin Yu (* equal contribution)

Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) 2024

Text-guided 3D face synthesis has achieved remarkable results by leveraging text-to-image (T2I) diffusion models. However most existing works focus solely on the direct generation ignoring the editing restricting them from synthesizing customized 3D faces through iterative adjustments. In this paper we propose a unified text-guided framework from face generation to editing. In the generation stage we propose a geometry-texture decoupled generation to mitigate the loss of geometric details caused by coupling. Besides decoupling enables us to utilize the generated geometry as a condition for texture generation yielding highly geometry-texture aligned results. We further employ a fine-tuned texture diffusion model to enhance texture quality in both RGB and YUV space. In the editing stage we first employ a pre-trained diffusion model to update facial geometry or texture based on the texts. To enable sequential editing we introduce a UV domain consistency preservation regularization preventing unintentional changes to irrelevant facial attributes. Besides we propose a self-guided consistency weight strategy to improve editing efficacy while preserving consistency. Through comprehensive experiments we showcase our method's superiority in face synthesis.

Text-guided 3D face synthesis-from generation to editing

Yunjie Wu*, Yapeng Meng*, Zhipeng Hu*, Lincheng Li, Haoqian Wu, Kun Zhou, Weiwei Xu, Xin Yu (* equal contribution)

Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) 2024

Text-guided 3D face synthesis has achieved remarkable results by leveraging text-to-image (T2I) diffusion models. However most existing works focus solely on the direct generation ignoring the editing restricting them from synthesizing customized 3D faces through iterative adjustments. In this paper we propose a unified text-guided framework from face generation to editing. In the generation stage we propose a geometry-texture decoupled generation to mitigate the loss of geometric details caused by coupling. Besides decoupling enables us to utilize the generated geometry as a condition for texture generation yielding highly geometry-texture aligned results. We further employ a fine-tuned texture diffusion model to enhance texture quality in both RGB and YUV space. In the editing stage we first employ a pre-trained diffusion model to update facial geometry or texture based on the texts. To enable sequential editing we introduce a UV domain consistency preservation regularization preventing unintentional changes to irrelevant facial attributes. Besides we propose a self-guided consistency weight strategy to improve editing efficacy while preserving consistency. Through comprehensive experiments we showcase our method's superiority in face synthesis.

Large-factor super-resolution of remote sensing images with spectra-guided generative adversarial networks

Yapeng Meng, Wenyuan Li, Sen Lei, Zhengxia Zou, Zhenwei Shi

IEEE Transactions on Geoscience and Remote Sensing (TGRS) 2022

Large-factor image super-resolution (SR) is a challenging task due to the high uncertainty and incompleteness of the missing details to be recovered. In remote sensing images, the subpixel spectral mixing and semantic ambiguity of ground objects make this task even more challenging. In this article, we propose a novel method for large-factor SR of remote sensing images named spectra-guided generative adversarial networks (SpecGANs). In response to the above problems, we explore whether introducing additional hyperspectral images (HSIs) to GAN as conditional input can be the key to solving the problems. Different from previous approaches that mainly focus on improving the feature representation of a single source input, we propose a dual-branch network architecture to effectively fuse low-resolution (LR) red, green, blue (RGB) images and corresponding HSIs, which fully exploit the rich hyperspectral information as conditional semantic guidance. Due to the spectral specificity of ground objects, the semantic accuracy of the generated images is guaranteed. To further improve the visual fidelity of the generated output, we also introduce the Latent Code Bank with rich visual priors under a generative adversarial training framework so that high-resolution, detailed, and realistic images can be progressively generated. Extensive experiments show the superiority of our method over the state-of-art image SR methods in terms of both quantitative evaluation metrics and visual quality. Ablation experiments also suggest the necessity of adding spectral information and the effectiveness of our designed fusion module. To our best knowledge, we are the first to achieve up to 32x SR of remote sensing images with high visual fidelity under the premise of accurate ground object semantics.

Large-factor super-resolution of remote sensing images with spectra-guided generative adversarial networks

Yapeng Meng, Wenyuan Li, Sen Lei, Zhengxia Zou, Zhenwei Shi

IEEE Transactions on Geoscience and Remote Sensing (TGRS) 2022

Large-factor image super-resolution (SR) is a challenging task due to the high uncertainty and incompleteness of the missing details to be recovered. In remote sensing images, the subpixel spectral mixing and semantic ambiguity of ground objects make this task even more challenging. In this article, we propose a novel method for large-factor SR of remote sensing images named spectra-guided generative adversarial networks (SpecGANs). In response to the above problems, we explore whether introducing additional hyperspectral images (HSIs) to GAN as conditional input can be the key to solving the problems. Different from previous approaches that mainly focus on improving the feature representation of a single source input, we propose a dual-branch network architecture to effectively fuse low-resolution (LR) red, green, blue (RGB) images and corresponding HSIs, which fully exploit the rich hyperspectral information as conditional semantic guidance. Due to the spectral specificity of ground objects, the semantic accuracy of the generated images is guaranteed. To further improve the visual fidelity of the generated output, we also introduce the Latent Code Bank with rich visual priors under a generative adversarial training framework so that high-resolution, detailed, and realistic images can be progressively generated. Extensive experiments show the superiority of our method over the state-of-art image SR methods in terms of both quantitative evaluation metrics and visual quality. Ablation experiments also suggest the necessity of adding spectral information and the effectiveness of our designed fusion module. To our best knowledge, we are the first to achieve up to 32x SR of remote sensing images with high visual fidelity under the premise of accurate ground object semantics.